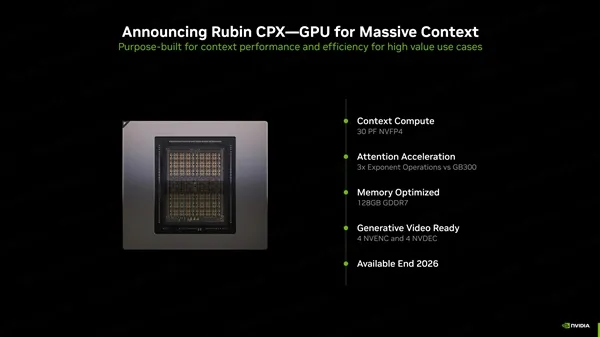

NVIDIA Rubin CPX GPU: 128GB VRAM for AI Inference

NVIDIA has unveiled the Rubin CPX GPU, a next-generation AI accelerator featuring a massive 128GB of GDDR7 VRAM. Built on the upcoming Rubin architecture, this GPU is purpose-built for long-context inference and agent-based AI workloads, marking a shift away from traditional GPU design priorities.

Although currently a paper launch, the Rubin CPX is expected to debut commercially in late 2026.

🚀 Key Specs of the Rubin CPX GPU

The Rubin CPX introduces several notable advancements tailored for AI inference:

- 128GB GDDR7 VRAM for ultra-large model support

- NVFP4 precision delivering up to 30 PFlops of compute

- Support for millions of tokens in long-context inference

- 3× faster attention performance vs. GB300 NVL72

- 4 NVENC + 4 NVDEC engines for media acceleration

Unlike previous generations, the Rubin CPX is optimized specifically for AI inference at scale, rather than general-purpose compute or gaming.
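To give a feel for why memory capacity matters for million-token contexts, here is a rough sizing sketch for the key-value (KV) cache that a transformer keeps during inference. The model shape (`LAYERS`, `KV_HEADS`, `HEAD_DIM`) is hypothetical and chosen only for illustration, as are the cache precisions; none of these come from NVIDIA, and the estimate ignores model weights and activations.

```python
def kv_cache_gb(tokens, layers, kv_heads, head_dim, bytes_per_elem):
    """Approximate KV-cache size in decimal GB for a single sequence.

    Every token stores one key vector and one value vector per layer,
    which is where the factor of 2 comes from.
    """
    total_bytes = 2 * layers * kv_heads * head_dim * bytes_per_elem * tokens
    return total_bytes / 1e9


# Hypothetical model shape (an assumption, not a real model's config).
LAYERS, KV_HEADS, HEAD_DIM = 64, 8, 128
VRAM_GB = 128          # Rubin CPX capacity quoted in the spec list
CONTEXT_TOKENS = 1_000_000

# Assumed cache precisions: 16-bit, 8-bit, and 4-bit elements.
for bytes_per_elem, label in [(2, "16-bit"), (1, "8-bit"), (0.5, "4-bit")]:
    size = kv_cache_gb(CONTEXT_TOKENS, LAYERS, KV_HEADS, HEAD_DIM, bytes_per_elem)
    verdict = "fits in" if size <= VRAM_GB else "exceeds"
    print(f"{label} KV cache at 1M tokens: {size:.1f} GB ({verdict} {VRAM_GB} GB)")
```

Under these assumptions, the cache alone can approach or exceed the card's capacity at a one-million-token context, which is why both memory size and cache precision matter for this class of workload.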

🧠 Rubin Architecture and Vera CPU Platform

NVIDIA confirmed that both the Rubin GPU and its companion Vera CPU have successfully taped out at TSMC, signaling strong progress toward production readiness.

The Rubin platform includes:

- Rubin GPU (successor to Blackwell)

- Vera CPU (next-gen data center processor)

- CX9 Super NIC for ultra-fast networking

- NVLink144 / Spectrum-X switches

- Silicon photonics integration

Key architectural highlights:

- Built on TSMC 3nm EUV process

- Uses HBM4 (8-stack) memory in standard variants

- Future Rubin Ultra (12-stack HBM4) planned for 2027

- 6th-gen NVLink delivering 3.6 TB/s bandwidth

- Up to 1.6 Tbps networking throughput
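The two bandwidth figures above use different units, which is easy to miss: NVLink is quoted in terabytes per second, while networking is quoted in terabits per second. The sketch below converts both to GB/s and compares the time to move a payload. The 128 GB payload is a hypothetical example, and the calculation assumes peak rates with no protocol overhead.

```python
def transfer_seconds(payload_gb, link_gb_per_s):
    """Ideal transfer time at peak link rate, ignoring all overhead."""
    return payload_gb / link_gb_per_s


NVLINK_GB_PER_S = 3600       # 3.6 TB/s (bytes) = 3600 GB/s
NETWORK_GB_PER_S = 1600 / 8  # 1.6 Tb/s (bits) = 1600 Gb/s = 200 GB/s

PAYLOAD_GB = 128  # hypothetical payload size, chosen for illustration

nvlink_t = transfer_seconds(PAYLOAD_GB, NVLINK_GB_PER_S)
network_t = transfer_seconds(PAYLOAD_GB, NETWORK_GB_PER_S)

print(f"NVLink:  {nvlink_t * 1000:.1f} ms")
print(f"Network: {network_t * 1000:.1f} ms")
print(f"NVLink is {network_t / nvlink_t:.0f}x faster at peak rates")
```

Once the units are aligned, the in-rack NVLink figure works out to 18 times the per-port network figure, which is why the interconnect and the network are listed as separate specs.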

Together, Rubin and Vera form a tightly integrated AI superchip ecosystem designed for hyperscale deployments.

🏢 Next-Gen AI Servers: Vera Rubin NVL144

NVIDIA also introduced a new class of AI infrastructure designed to scale Rubin GPUs across entire data center racks.

Vera Rubin NVL144

- 36 Vera CPUs + 144 Rubin GPUs

- 1.4 PB/s HBM4 bandwidth

- Up to 75TB of fast memory

- Delivers 3.5 EFlops (NVFP4)

- ~3.3× faster than GB300 NVL72

Vera Rubin NVL144 CPX

- Adds 144 Rubin CPX GPUs to the NVL144 rack

- Total: 144 Rubin CPX GPUs + 144 Rubin GPUs + 36 Vera CPUs per rack

- 1.7 PB/s memory bandwidth

- 100TB of fast memory

- Supports InfiniBand (Quantum-X800) or Spectrum-X Ethernet

- Peak performance: 8 EFlops (~7.5× boost)
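As a quick sanity check, the peak figures and speedup multiples quoted for the two racks can be divided to see what GB300 NVL72 baseline they imply. This is only arithmetic on the numbers in this article; the precision and workload behind the comparison are not stated here, so the result is an approximation.

```python
# (peak EFlops, quoted speedup vs. GB300 NVL72), as listed in this article
systems = {
    "Vera Rubin NVL144": (3.5, 3.3),
    "Vera Rubin NVL144 CPX": (8.0, 7.5),
}


def implied_baseline(eflops, speedup):
    """Baseline performance implied by a peak figure and its speedup."""
    return eflops / speedup


baselines = {
    name: implied_baseline(eflops, speedup)
    for name, (eflops, speedup) in systems.items()
}

for name, value in baselines.items():
    print(f"{name}: implies a GB300 NVL72 baseline of ~{value:.2f} EFlops")
```

Both rows point to a baseline of roughly 1.1 EFlops, so the two speedup claims are at least consistent with each other.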

NVIDIA claims that these systems could generate about $5 billion in token revenue for every $100 million invested, a 50:1 ratio. This is a vendor projection rather than a measured result.

⚔️ AMD’s Response: The MI450 GPU

NVIDIA’s dominance is being challenged by AMD’s upcoming MI450 GPU, which aims to compete directly with both Blackwell and Rubin architectures.

Key highlights of the MI450:

- Designed for training, inference, and distributed AI workloads

- Built on a unified UDNA architecture

- Positioned as AMD’s “EPYC moment” for AI

- Claimed by AMD to deliver industry-leading performance

If AMD delivers on these ambitions, the MI450 could significantly reshape the competitive landscape in AI accelerators.

🧩 Final Thoughts

The next wave of AI hardware is clearly focused on inference scalability and efficiency:

- Rubin CPX introduces massive VRAM and long-context capabilities

- Rubin + Vera redefine tightly integrated AI platforms

- NVL144 servers push performance into multi-EFlop territory

- AMD MI450 sets the stage for serious competition

With Rubin launching in 2026, Rubin Ultra in 2027, and further architectures beyond, the AI hardware race is entering a new era, one defined by scale, memory, and inference efficiency.